by Douglas Broom*

Artificial intelligence (AI) promises to deliver significant benefits to businesses and society – but it also has the potential to cause significant harm if we fail to understand the risks that the technology poses.

That’s the view of Chief Risk Officers (CROs) from major corporations and international organizations who participated in the World Economic Forum’s Global Risks Outlook Survey.

The survey is detailed in the Forum’s mid-year Chief Risk Officers Outlook, which warns that risk management is not keeping up with the rapid advances in AI technologies.

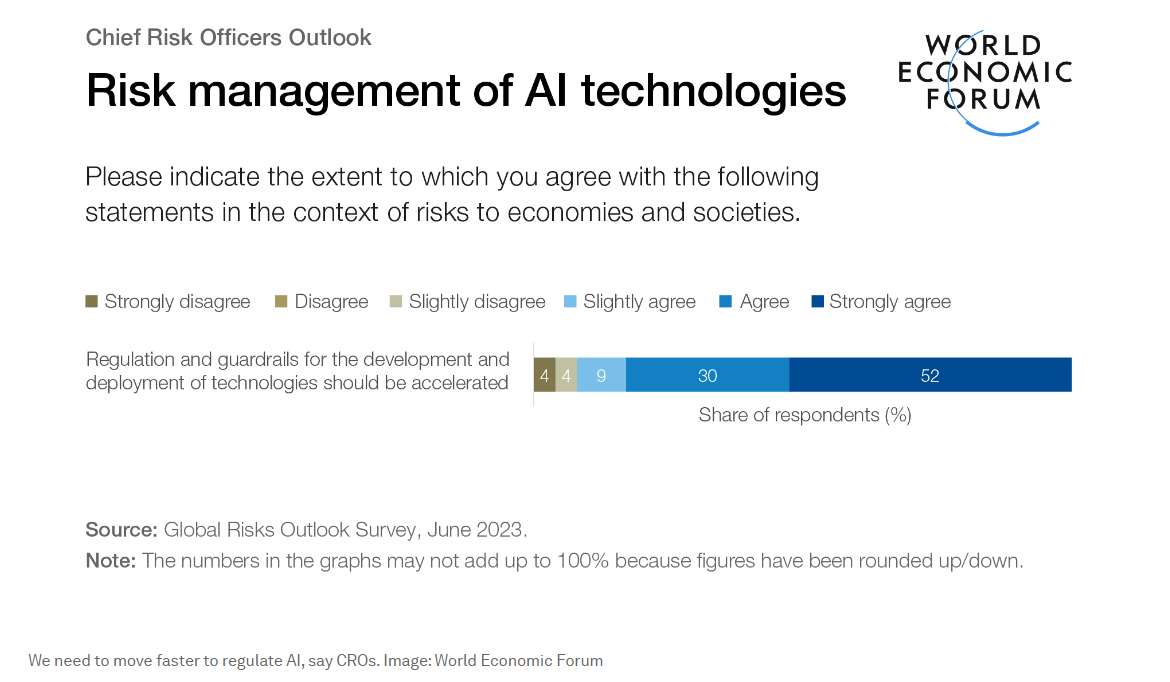

Three-quarters of the CROs surveyed said that the use of AI poses a reputational risk to their organization, while nine out of ten said more needed to be done to regulate the development and use of AI.

Almost half were in favour of pausing or slowing down the development of new AI technologies until the risks are better understood. AI “creates a complex and uncertain environment in which organizations must operate,” the report says.

“Recent months have seen a sharp increase in discussion of technology-related risks, particularly in the context of a surge of interest in the exponential advances being made by generative AI technologies.”

Significant benefits and significant harms

Although AI technologies have the potential to provide “significant benefits” in sectors like agriculture, education and healthcare, “they also have the power to cause significant harms,” the report says.

Among the biggest risks identified by the CROs is malicious use of AI. Because they are easy to use, generative AI technologies can be open to abuse by people seeking to spread misinformation, facilitate cyber attacks or access sensitive personal data.

What makes AI a serious risk is its “opaque inner workings”. No one, the report says, fully understands how AI content is created. This opacity heightens two risks highlighted by the CROs: the inadvertent sharing of personal data and bias in decisions based on AI algorithms.

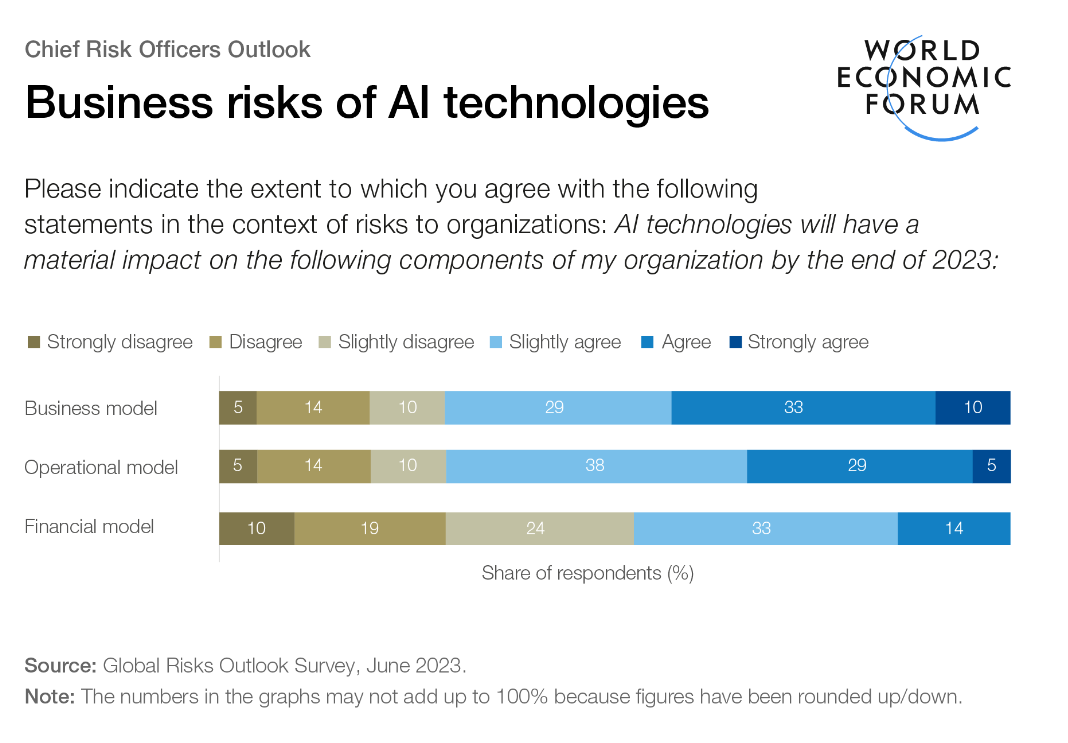

The lack of clarity about how AI works also makes it hard to anticipate future risks, the report adds, but the CROs say the areas of business most at risk from AI are operations, business models and strategies.

All of those surveyed agreed that the development of AI was outpacing their ability to manage its potential ethical and societal risks – and 43% said the development of new AI technologies should be paused or slowed until their potential impact was better understood.

Regulating AI development

Over half of the CROs said they understood how regulation might affect their organization and 90% said that efforts to regulate the development of AI needed to be accelerated.

More than half are planning to conduct an audit of the AI already in use in their organizations to assess its safety, legality and “ethical soundness”, although some said senior management were unwilling to view AI as a business risk.

Peter Giger, Group Chief Risk Officer at Zurich Insurance Group and one of the CROs who contributed to the Outlook report, says it’s wrong to ignore the risks, but businesses need to take a wider, longer-term approach.

“Too much and too-narrow a focus on the risks that are likely to dominate over the next six months or so can lead us into being easily distracted from dealing with the big risks that will determine the future,” he said.

“AI offers a good example, favouring the long-term-thinking approach. Will AI disrupt everyone’s lives today? Probably not. It’s, for many of us, not an immediate threat. But ignoring the implications and trends that AI is going to bring with it over time would be a massive mistake.”

Guidance for responsible AI use

In June, the Forum published recommendations for the responsible development of AI which urged developers to be more open and transparent and to use more precise and shared terminology.

The guidance also called for the tech industry to do more to increase public understanding of AI capabilities and limitations and to build trust by taking greater account of society’s concerns and user feedback.

*Senior Writer, Forum Agenda

**first published in: Weforum.org

By: N. Peter Kramer
